Understanding the Differences Between Gemma 2 and Llama 3 for Optimal User Experience

Choosing between Gemma 2 and Llama 3 starts with understanding where the two models actually differ. This guide walks through each model's capabilities, advantages, and areas of application, along with the potential pitfalls. We'll start with the common pain points users face when choosing between them and offer practical steps toward an informed decision.

A common difficulty is pinning down the differences between Gemma 2 and Llama 3 in performance, flexibility, and cost. Both are capable open-weight language models (Gemma 2 from Google, Llama 3 from Meta), but which one suits you depends on your project's requirements.

Quick Reference

- Immediate Action: Identify your primary use case, such as text generation, language translation, or data analysis.

- Essential Tip: Try both models on a hosted demo, or run the open weights locally, to see how they perform in practice before committing.

- Common Mistake to Avoid: Selecting a model without considering scalability and future updates.

Detailed How-To: Choosing Between Gemma 2 and Llama 3

Selecting between Gemma 2 and Llama 3 means weighing performance benchmarks, feature sets, and cost structures. Below, we break down each component in turn.

Step 1: Define Your Requirements

Start by clearly defining what you need from an AI model. This includes:

- Performance Needs: Are you looking for speed, accuracy, or both?

- Budget Constraints: Do you have a fixed budget for deployment and maintenance?

- Scalability: Do you expect the project to grow in scope over time?

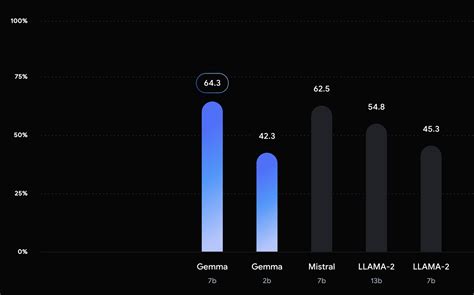

Step 2: Benchmark Performance

Next, look at performance benchmarks for both models. These can typically be found in each model's technical report and model card, and in independent user reviews.

For instance, one model may come out ahead on long-form text generation while the other does better on nuanced language comprehension. Which one leads depends on the task and on the model size, so compare like-sized variants rather than assuming a blanket ranking.

You can also run your own tests by creating small datasets to evaluate each model's output. Here’s how:

- Create a dataset relevant to your use case.

- Run each model on the dataset and compare the results.

- Consider factors like execution time, accuracy, and output quality.
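
The three steps above can be sketched as a small harness. This is a minimal sketch rather than a definitive benchmark: `evaluate`, `exact_match`, and the two stub models are hypothetical names, and in practice each callable would wrap whatever inference client you use for Gemma 2 or Llama 3. Exact-match scoring is also an assumption; open-ended output usually needs a softer quality measure.

```python
import time
from typing import Callable, Dict, List, Tuple

# A "model" here is any callable that maps a prompt to a completion.
# In a real test it would wrap your Gemma 2 or Llama 3 inference client.
Model = Callable[[str], str]


def exact_match(prediction: str, reference: str) -> bool:
    """Crude accuracy check: case- and whitespace-insensitive equality."""
    return prediction.strip().lower() == reference.strip().lower()


def evaluate(model: Model, dataset: List[Tuple[str, str]]) -> Dict[str, float]:
    """Run the model over (prompt, reference) pairs; report accuracy and latency."""
    if not dataset:
        raise ValueError("dataset must contain at least one example")
    correct = 0
    start = time.perf_counter()
    for prompt, reference in dataset:
        if exact_match(model(prompt), reference):
            correct += 1
    elapsed = time.perf_counter() - start
    return {
        "accuracy": correct / len(dataset),
        "seconds_per_example": elapsed / len(dataset),
    }


# Hypothetical stand-ins so the sketch runs without any model installed.
def stub_model_a(prompt: str) -> str:
    return "Paris" if "France" in prompt else "unknown"


def stub_model_b(prompt: str) -> str:
    answers = {"Capital of France?": "paris", "Capital of Japan?": "Tokyo"}
    return answers.get(prompt, "unknown")


dataset = [("Capital of France?", "Paris"), ("Capital of Japan?", "Tokyo")]
results = {
    "model_a": evaluate(stub_model_a, dataset),
    "model_b": evaluate(stub_model_b, dataset),
}
for name, metrics in results.items():
    print(name, metrics)
```

Keeping the model behind a plain callable means the same dataset and scoring code run unchanged against either model, which is what makes the comparison fair.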

Step 3: Assess Feature Sets

Review the feature sets of both models:

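- Gemma 2: A text-only language model from Google, released in 2B, 9B, and 27B parameter sizes. It takes text in and produces text out; it does not include text-to-speech, and capabilities such as error correction come from how you prompt or fine-tune it.

- Llama 3: A text-only language model from Meta, released in 8B and 70B parameter sizes. Translation and sentiment analysis are tasks you prompt or fine-tune it for rather than built-in features.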

Step 4: Evaluate Cost Structures

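Cost is a critical factor. Both models are distributed as open weights under their own license terms, so the cost lies mostly in compute rather than in a purchase price. Review how you will run them:

- Will you self-host on your own or rented GPUs, or use a hosted API that bills per token?

- How does the cost grow with high-volume data processing?

- Do the license terms (the Gemma Terms of Use and the Meta Llama 3 Community License) permit your intended use?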
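
One way to make the cost questions above concrete is to compare a self-hosted deployment against a pay-per-token endpoint. The sketch below is only arithmetic under stated assumptions: the function names are hypothetical, and every price and volume is a placeholder to be replaced with quotes from your own provider.

```python
def self_hosted_monthly_cost(gpu_hourly_rate: float, gpus: int, hours_per_month: float) -> float:
    """Cost of renting GPUs to serve the model yourself."""
    return gpu_hourly_rate * gpus * hours_per_month


def hosted_api_monthly_cost(tokens_per_month: int, price_per_million_tokens: float) -> float:
    """Cost of a pay-per-token hosted endpoint."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def break_even_tokens(self_hosted_cost: float, price_per_million_tokens: float) -> float:
    """Monthly token volume above which self-hosting becomes the cheaper option."""
    if price_per_million_tokens <= 0:
        raise ValueError("price_per_million_tokens must be positive")
    return self_hosted_cost / price_per_million_tokens * 1_000_000


# Placeholder inputs, NOT real prices: one GPU at 2.00 per hour running all month,
# versus an API at 0.50 per million tokens and 500 million tokens of traffic.
hosting = self_hosted_monthly_cost(gpu_hourly_rate=2.0, gpus=1, hours_per_month=720)
api = hosted_api_monthly_cost(tokens_per_month=500_000_000, price_per_million_tokens=0.5)
threshold = break_even_tokens(hosting, price_per_million_tokens=0.5)

print(f"self-hosted: {hosting:.2f}  hosted API: {api:.2f}")
print(f"self-hosting pays off above {threshold:,.0f} tokens per month")
```

The break-even figure ignores engineering time and idle capacity, so treat it as a lower bound on the volume needed to justify self-hosting.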

Step 5: Look at Community and Support

Community support and documentation can significantly ease the integration process:

- Visit the official documentation and forums to gauge community activity.

- Check for availability of tutorials, case studies, and community-contributed fine-tunes and integrations.

Practical FAQ

What are the main differences in the architecture between Gemma 2 and Llama 3?

Both models are decoder-only transformers with an 8K-token context window, so neither has an advantage on long inputs. The differences lie in attention design and tokenization:

- Gemma 2: Interleaves local sliding-window attention layers with global attention layers, and uses grouped-query attention and logit soft-capping.

- Llama 3: Uses a standard dense transformer with grouped-query attention and a tokenizer with a vocabulary of roughly 128K tokens. It has no recurrent elements.

If your project requires extensive language understanding and complex context recognition, Llama 3 may provide an edge, though results depend heavily on model size, so confirm this on your own data.
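
Gemma 2's technical report describes alternating local sliding-window attention layers with global attention layers. As a rough illustration of what that distinction means, the toy sketch below builds the two kinds of causal mask. It is not either model's implementation: the function names are made up, and the tiny sequence length and window are chosen only so the output is readable.

```python
from typing import List


def causal_mask(seq_len: int) -> List[List[int]]:
    """Global causal attention: token i may attend to every token j <= i."""
    return [[1 if j <= i else 0 for j in range(seq_len)] for i in range(seq_len)]


def sliding_window_mask(seq_len: int, window: int) -> List[List[int]]:
    """Local causal attention: token i attends to the last `window` tokens, itself included."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        [1 if i - window < j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


global_rows = causal_mask(5)
local_rows = sliding_window_mask(5, window=2)

print("global:")
for row in global_rows:
    print(row)
print("local (window=2):")
for row in local_rows:
    print(row)
```

The local mask keeps the per-token attention cost bounded by the window size, while the global layers still let information travel across the whole sequence.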

Can I use both models together?

While using both models together may require significant integration effort, it is technically feasible. This hybrid approach could offer a comprehensive solution where you leverage the strengths of both models.

- You might use Gemma 2 for large text generation tasks and Llama 3 for tasks requiring more nuanced language comprehension.

- For implementation, put a thin routing layer in front of both models that sends each request to one of them, or passes one model's output to the other as input.

This, however, requires careful planning and a clear understanding of the workflow to ensure seamless operation.
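
A hybrid setup of this kind can be sketched as a small router. Everything here is an assumption for illustration: `classify_task`, `make_router`, and the two stub functions are hypothetical, and the keyword heuristic is deliberately naive. A real system would call actual model clients and would likely need a better task classifier.

```python
from typing import Callable, Dict

Model = Callable[[str], str]


def classify_task(prompt: str) -> str:
    """Very rough heuristic: treat writing requests as 'generation', the rest as 'comprehension'."""
    generation_cues = ("write", "draft", "compose", "generate")
    lowered = prompt.lower()
    if any(cue in lowered for cue in generation_cues):
        return "generation"
    return "comprehension"


def make_router(routes: Dict[str, Model]) -> Model:
    """Return a callable that forwards each prompt to the model registered for its task type."""
    def route(prompt: str) -> str:
        return routes[classify_task(prompt)](prompt)
    return route


# Hypothetical stand-ins for real Gemma 2 and Llama 3 clients.
def gemma_stub(prompt: str) -> str:
    return f"[gemma-2] {prompt}"


def llama_stub(prompt: str) -> str:
    return f"[llama-3] {prompt}"


router = make_router({"generation": gemma_stub, "comprehension": llama_stub})
print(router("Write a product description for a kettle"))
print(router("What is the sentiment of this review?"))
```

Because the router exposes the same prompt-in, text-out interface as a single model, the rest of the application does not need to know that two models sit behind it.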

How do I determine if my project will benefit from either model?

To determine which model would best benefit your project, consider these practical steps:

- Start with small, manageable datasets to test both models in real scenarios.

- Analyze the results based on key performance indicators like speed, accuracy, and output relevance.

- Engage with community forums or seek professional advice for insights into best practices.

Keep in mind the long-term goals of your project. Strong results in initial tests are encouraging, but re-run the evaluation as your data and requirements grow, since small-scale results do not guarantee behavior at scale.
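
To turn key performance indicators like speed, accuracy, and output relevance into a single comparison, one option is a weighted score. This is a sketch under assumptions: the function name, the weights, and the metric values are all made up, and it presumes you have already normalized each metric to the 0 to 1 range with higher meaning better.

```python
from typing import Dict


def weighted_score(metrics: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine normalized KPIs (0..1, higher is better) into one weighted average."""
    missing = set(weights) - set(metrics)
    if missing:
        raise KeyError(f"missing metrics: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(metrics[name] * weight for name, weight in weights.items()) / total_weight


# Illustrative weights: this hypothetical project values accuracy twice as much as the rest.
weights = {"speed": 1.0, "accuracy": 2.0, "relevance": 1.0}

# Made-up measurements, not real results for either model.
candidates = {
    "model_a": {"speed": 0.5, "accuracy": 1.0, "relevance": 0.5},
    "model_b": {"speed": 1.0, "accuracy": 0.5, "relevance": 0.5},
}

scores = {name: weighted_score(metrics, weights) for name, metrics in candidates.items()}
best = max(scores, key=scores.get)
print(scores, "->", best)
```

Writing the weights down before running the tests helps keep the decision tied to project priorities rather than to whichever number happens to look best afterward.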

By following this guide, you’ll be equipped to make a well-informed choice between Gemma 2 and Llama 3 and to select the model that best fits your current and future needs. This approach addresses the immediate pain points and sets a foundation for scalable, effective AI integration.